-

Welcome to Talking Time's third iteration! If you would like to register for an account, or have already registered but have not yet been confirmed, please read the following:

- The CAPTCHA key's answer is "Percy"

- Once you've completed the registration process please email us from the email you used for registration at percyreghelper@gmail.com and include the username you used for registration

Once you have completed these steps, Moderation Staff will be able to get your account approved.

You are using an out of date browser. It may not display this or other websites correctly.

You should upgrade or use an alternative browser.

You should upgrade or use an alternative browser.

Articles that don't fit anywhere else

- Thread starter Büge

- Start date

I've never read fic and have only watched a scant few episodes of the anime in recent adulthood, so the Akane in my head is sourced exclusively from the manga, from the first 14 volumes or so that I've had for most of my life. When I was a young child I think it was her I imprinted upon and kept reading for, and rooted for in every instance where the characters were set up at odds against one another. Some things about Ranma ½ frustrate me but she's among the other stuff that makes me want to track down the rest of the volumes and story I've never interacted with.

jesus christ @Büge why you do this now i have a million things to say and that article is absolutely brilliant and i've been meaning to do a super-deep dive into a literary analysis of the manga and now i want to say everything right here and right now about personal and popular interpretations of akane's character but i'm at work and i already spent a bunch of time reading and rereading that article and i have class after work and aaaaaaaaaaaaa good article though i wanna reply to it at some point

EDIT: OH OF COURSE Talen wrote it yeah i should have figured so now i have stuff to say here and stuff i want to reply to talen on twitter and augh why isn't the day 30 hours long

EDIT: OH OF COURSE Talen wrote it yeah i should have figured so now i have stuff to say here and stuff i want to reply to talen on twitter and augh why isn't the day 30 hours long

Good essay! I definitely recognize all those Akane from back in the day. (I have decided that the plural of Akane is 'Akane' because 'Akanes' looks weird.) Not the mention the whole broader realm of "fanfic canon" that inevitably sprung up around any sufficiently popular series. I wonder if that's still as much of a thing today. Obviously people still write fanfic, but I fell like it doesn't loom nearly as large in the overall fan consciousness of a work now as it did when text was the only practical form of media to share over the internet.

muteKi

Geno Cidecity

This is probably a good place to put this, an article I saw via Twitter by researcher David Klein:

www.psychologytoday.com

www.psychologytoday.com

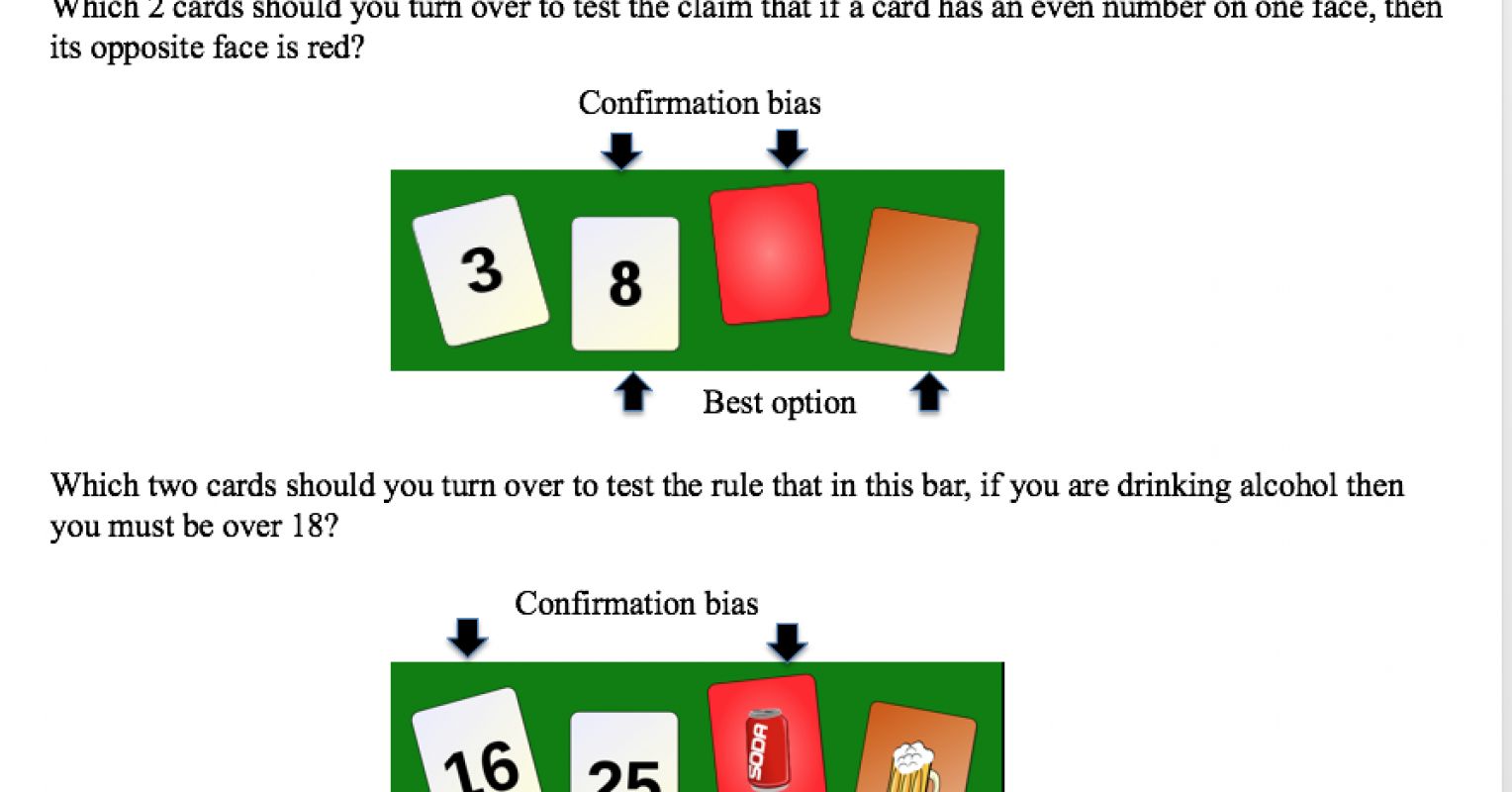

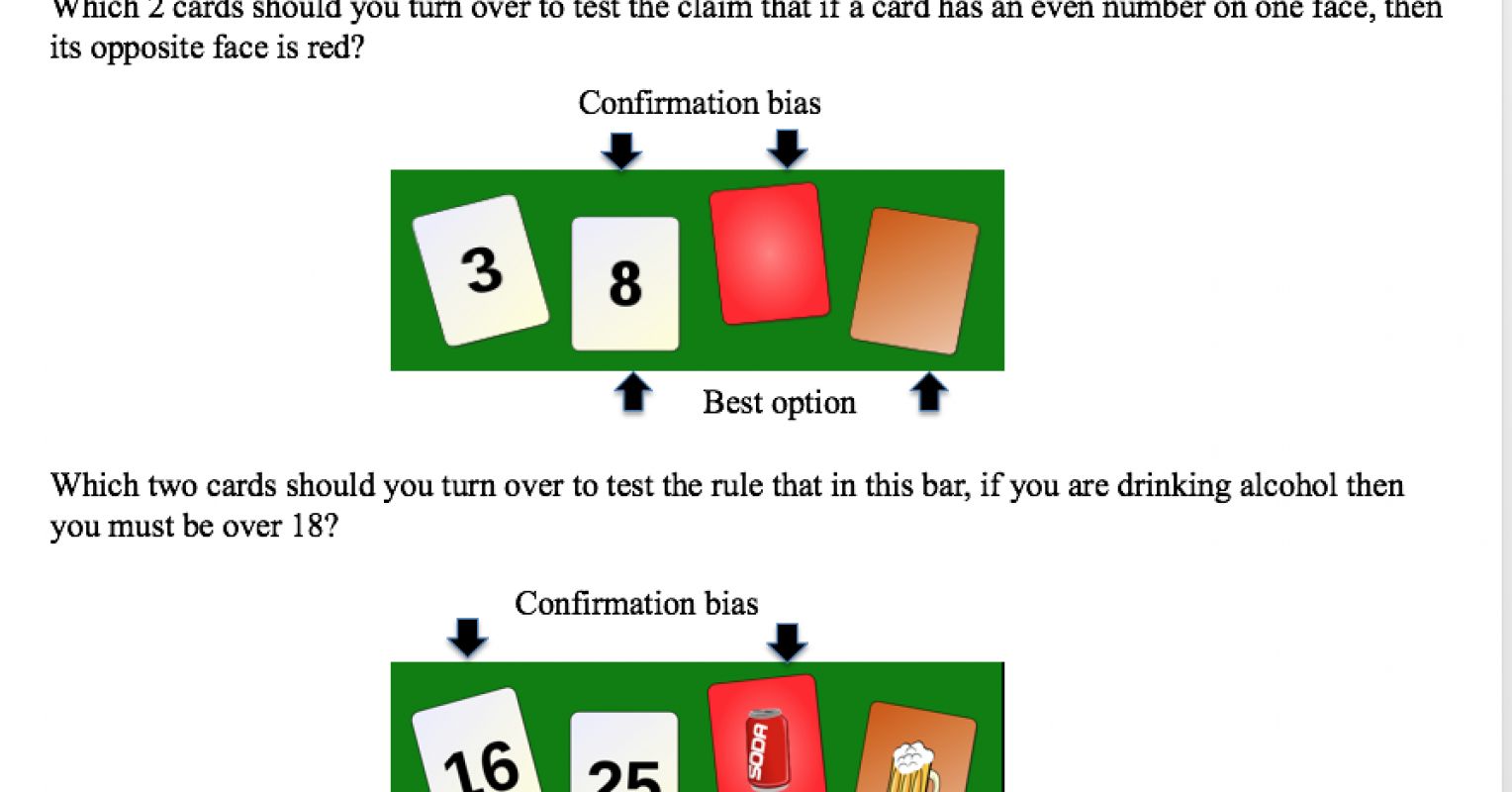

The basic summary of this is: our model/concept of 'confirmation bias' is lacking because it isn't a complete picture of knowledge synthesis. What we call 'confirmation bias' is more common in early stages of knowledge gathering where there exists some data that may or may not have a pattern in it. These first steps of confirmation bias are an attempt to actually generate a pattern for the purpose of the user, and then are usually followed-up with attempts to generate counter-examples of that pattern. Experiments support this, with less-abstract examples of pattern-derivation being less subject to confirmation bias, like matching a rule for cards vs. matching a concrete rule about drinking modeled by cards.

The Curious Case of Confirmation Bias

Confirmation bias is frequently cited as a reason why people make poor judgments. However, it rests on three claims that turn out to be very questionable.

The basic summary of this is: our model/concept of 'confirmation bias' is lacking because it isn't a complete picture of knowledge synthesis. What we call 'confirmation bias' is more common in early stages of knowledge gathering where there exists some data that may or may not have a pattern in it. These first steps of confirmation bias are an attempt to actually generate a pattern for the purpose of the user, and then are usually followed-up with attempts to generate counter-examples of that pattern. Experiments support this, with less-abstract examples of pattern-derivation being less subject to confirmation bias, like matching a rule for cards vs. matching a concrete rule about drinking modeled by cards.

Lady

something something robble

This is interesting food for thought. I think one of the complaints/perceived dangers of "confirmation bias" is whether people stop after confirming their initial bias. Taking the expanded data from the Wason study, did the 6/29 people who failed to guess the correct rule continue trying? What would have happened if it was a study where the participation had some barrier to entry that would weed out unmotivated participants? (E.g. conducting it over repeated visits or via email with long times between a guess and validation) How many would be content to never know the correct rule after a few of their guesses had been dismissed? What if there was *no* validation? How many would continue searching?

I think those situations would describe better what we think of nowadays as "confirmation bias" -- the person on facebook who finds one article that they agree with and share immediately. There is no immediate validation-- no one is in a position to say that their guess is absolutely right or wrong, they only have their friends who they may or may not trust as authorities on the subject. There may be little motivation to continually search for the correct answer in the face of failure.

I think the article makes a good point when it tries to steer away from the conclusion that trying to validate your hypotheses before trying to invalidate them is baddd. You need both kinds of data, and it doesn't particularly matter what order you test them in, positive or negative. However, I think that if you only perform one side of the test, then that is confirmation bias working and it is misleading or dangerous.

For example, in QA if you are looking for text to appear on the page in a certain context, you set up the context, and the text appears, then that looks like a passing test, right? You also need to test that the text does not appear when that context is not present. You cannot confidently say that the context was the factor causing the text to show up unless you test that without it, the text does not show up. In the example above, perhaps the logic that calculates the context is faulty, e.g. it always returns that the context is true, and you would not know this unless you checked the scenario without that context in place. There were many times in QA where some test would pass, but it only passed because a second bug was present that obscured the other. Using the example above, it might be that the text would appear in that context, but it might turn out that the logic determining that the context was there was not even connected to the text at all, so that the text would appear all the time. In that case, you cannot say that the logic is correct or not because it is completely decoupled from the behavior you're observing.

Your average QA is fairly unmotivated; it is a high stress, low wage, and frequently disrespected job, or at least, that is the impression I've gotten from my time in the industry. These are certainly the kinds of complaints that I would hear from the people I worked with. When training new QA, them stopping at checking the positive test worked was a pattern that I saw over and over. I couldn't say whether it was more on par with the 6/29 who were unable to guess a numerical rule after 5 tries, or with 16/29 who were unable to guess after 2 tries, or 23/29 who were unable to guess after 1 try. I think it would be worthwhile to try to find out.

I think those situations would describe better what we think of nowadays as "confirmation bias" -- the person on facebook who finds one article that they agree with and share immediately. There is no immediate validation-- no one is in a position to say that their guess is absolutely right or wrong, they only have their friends who they may or may not trust as authorities on the subject. There may be little motivation to continually search for the correct answer in the face of failure.

I think the article makes a good point when it tries to steer away from the conclusion that trying to validate your hypotheses before trying to invalidate them is baddd. You need both kinds of data, and it doesn't particularly matter what order you test them in, positive or negative. However, I think that if you only perform one side of the test, then that is confirmation bias working and it is misleading or dangerous.

For example, in QA if you are looking for text to appear on the page in a certain context, you set up the context, and the text appears, then that looks like a passing test, right? You also need to test that the text does not appear when that context is not present. You cannot confidently say that the context was the factor causing the text to show up unless you test that without it, the text does not show up. In the example above, perhaps the logic that calculates the context is faulty, e.g. it always returns that the context is true, and you would not know this unless you checked the scenario without that context in place. There were many times in QA where some test would pass, but it only passed because a second bug was present that obscured the other. Using the example above, it might be that the text would appear in that context, but it might turn out that the logic determining that the context was there was not even connected to the text at all, so that the text would appear all the time. In that case, you cannot say that the logic is correct or not because it is completely decoupled from the behavior you're observing.

Your average QA is fairly unmotivated; it is a high stress, low wage, and frequently disrespected job, or at least, that is the impression I've gotten from my time in the industry. These are certainly the kinds of complaints that I would hear from the people I worked with. When training new QA, them stopping at checking the positive test worked was a pattern that I saw over and over. I couldn't say whether it was more on par with the 6/29 who were unable to guess a numerical rule after 5 tries, or with 16/29 who were unable to guess after 2 tries, or 23/29 who were unable to guess after 1 try. I think it would be worthwhile to try to find out.

Johnny Unusual

(He/Him)

Violentvixen

(She/Her)

Oh nice, I saw that doodle and meant to look it up and forgot. That is freaking cool.

Violentvixen

(She/Her)

My book club is reading The Old Capital by Yasunari Kawabata and the main characters are all in the fabric/kimono industry in 1950s-ish Kyoto. One of the other members wanted to know more about the chintz fabric that was mentioned in the book and found this article about the history of the fabric in Japan. I found it fascinating so I wanted to share.

I have to admit that until this article I thought chintz was a Yiddish word! Had no idea the fabric originated in India. Learned a lot.

I have to admit that until this article I thought chintz was a Yiddish word! Had no idea the fabric originated in India. Learned a lot.